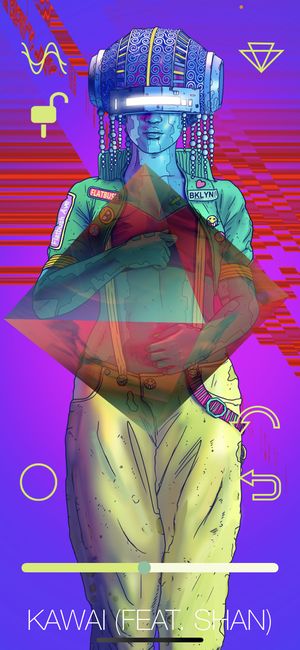

Curate Alpha

I worked on this project on & off from 2016 to 2022. The app is written in Objective-C & Swift for iOS. The project was conceived by the artist MeLo-X with Lou Auguste, who is the showrunner / producer.

The original app was created by another developer, Kyle Truscott. It displays a 3D scene containing a 3D model, rendered with the SceneKit framework.

You interact with the UI by dragging one or more fingers on the "gem", which applies real-time audio effects to the output while audio is playing.
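As a rough illustration of how a drag can drive an effect, here is a minimal sketch that maps a normalized horizontal touch position to a filter cutoff frequency. The function name `cutoffFrequency(forNormalizedX:minHz:maxHz:)`, the frequency range, and the choice of a filter as the effect are all assumptions made for this example; this is not the mapping the app or the Superpowered SDK actually uses.

```swift
import Foundation

/// Hypothetical helper: maps a normalized touch position (0...1 across the
/// "gem") to a filter cutoff in Hz. The range and the exponential curve are
/// assumptions for illustration, not values taken from the app.
func cutoffFrequency(forNormalizedX x: Double,
                     minHz: Double = 20.0,
                     maxHz: Double = 20_000.0) -> Double {
    // Clamp so touches that drift outside the gem stay within range.
    let clamped = min(max(x, 0.0), 1.0)
    // Interpolate exponentially, because pitch is perceived logarithmically.
    return minHz * pow(maxHz / minHz, clamped)
}
```

With more than one finger down, each touch could feed a different parameter in the same way, for example one finger for cutoff and another for the wet/dry mix.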

When the song changes, the shape rotates to a new position to indicate that a different track is playing.
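One way to think about the rotation is to give each track its own angle around the shape. The sketch below assumes the tracks are spread evenly around a full turn; the function `rotationAngle(forTrack:trackCount:)` is hypothetical, and the real app may position the shape quite differently.

```swift
import Foundation

/// Hypothetical helper: returns the rotation (in radians) that represents a
/// given track, assuming tracks are spaced evenly around a full turn.
func rotationAngle(forTrack index: Int, trackCount: Int) -> Double {
    // With no tracks there is nothing to point at, so leave the shape as is.
    guard trackCount > 0 else { return 0.0 }
    // Wrap the index so negative or out-of-range values still map to a track.
    let wrapped = ((index % trackCount) + trackCount) % trackCount
    return 2.0 * Double.pi * Double(wrapped) / Double(trackCount)
}
```

In SceneKit, an angle like this could be handed to `SCNAction.rotateTo(x:y:z:duration:)` and run on the model's node to animate the change.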

When I joined the project, I was asked to work on the following features & enhancements:

- add more real-time audio effects using the Superpowered SDK

- add support for Spotify using the iOS SDK

- add a YouTube video section - this is de-scoped for now

- change the app so that it can download content from the cloud

- save & export mixes

- add inter-app audio support using Audiobus - we were assisted on this by the very talented creative technologist Peter Courtemanche

- using inter-app audio, the output could be routed to apps like GarageBand

There were already some real-time effects associated with gestures in the app. I added a new scene for debugging to test the effects; MeLo-X actually quite liked this change & we made it part of the app release after giving it some design attention.

I asked a colleague, Craig Shaynak, who is an audio expert, to take over some of the audio development work, as it requires quite specialized knowledge.

The Spotify integration got de-scoped for two reasons:

- in summer 2016, the Spotify SDK was not IPv6 compliant; Apple would have rejected the app if it had used that version of the Spotify SDK

- manipulating the Spotify streaming audio required getting approval from Spotify